We all know that we should always close resp.Body in Go. You’re bound to see this snippet in most Go code.
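The snippet in question usually looks something like the sketch below. It is a minimal illustration rather than code from any particular project: `fetchFromLocalServer` is a made-up helper, and it talks to a throwaway `httptest` server so that it runs without network access.

```go
package main

import (
	"fmt"
	"io"
	"net/http"
	"net/http/httptest"
)

// fetchFromLocalServer is a hypothetical helper: it starts a local test
// server, sends one GET request to it, and returns the response body.
func fetchFromLocalServer() (string, error) {
	srv := httptest.NewServer(http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		fmt.Fprint(w, "hello")
	}))
	defer srv.Close()

	resp, err := srv.Client().Get(srv.URL)
	if err != nil {
		return "", err
	}
	// The line this post is about: always close the body when done with it.
	defer resp.Body.Close()

	body, err := io.ReadAll(resp.Body)
	if err != nil {
		return "", err
	}
	return string(body), nil
}

func main() {
	body, err := fetchFromLocalServer()
	if err != nil {
		panic(err)
	}
	fmt.Println(body)
}
```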


For a while now, I’ve been intrigued by why we really need to do this. I’ve known that closing the body frees up the connection so it can be reused internally for other requests. But as they say, the devil is in the details. I started digging into the net/http package to understand this better, but of course I got sidetracked quite a bit: I noticed that even though I was firing multiple requests, Wireshark was only reporting 1 (YES, ONE) TLS connection.

So I followed that track. I plan to come back to net/http sooner or later, but for now let’s first talk about HTTP, mostly about what Keep-Alive means and why it’s important.

Keep Alive

HTTP persistent connection, also called HTTP keep-alive, or HTTP connection reuse, is the idea of using a single TCP connection to send and receive multiple HTTP requests/responses, as opposed to opening a new connection for every single request/response pair.

Simply said, instead of creating new connections each time, we reuse the existing connection as much as possible. This is beneficial because we get rid of the time taken for the TCP handshake for each consecutive call.

Persistent connections have been the default mode of operation since HTTP/1.1, and they are the base on which HTTP/2 was built.

HTTP/2

Let’s say we need to make 100 calls to a given domain.


So if the server supports HTTP/2, we’ll only have a single TLS handshake (i.e. for the first request made), and the rest of the requests reuse the same connection. This is amazing, since a TLS handshake is expensive in terms of latency.

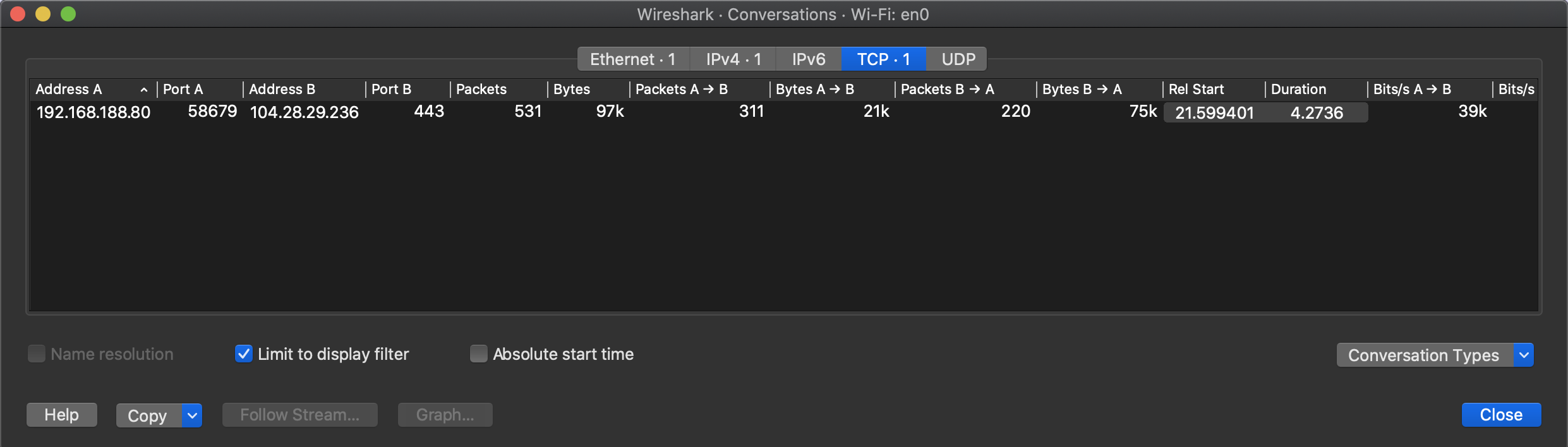

This is easily confirmed by tracking our TCP connections in Wireshark.

I was curious whether this would remain the same if I ran the requests concurrently. Would they still use the same TLS connection?

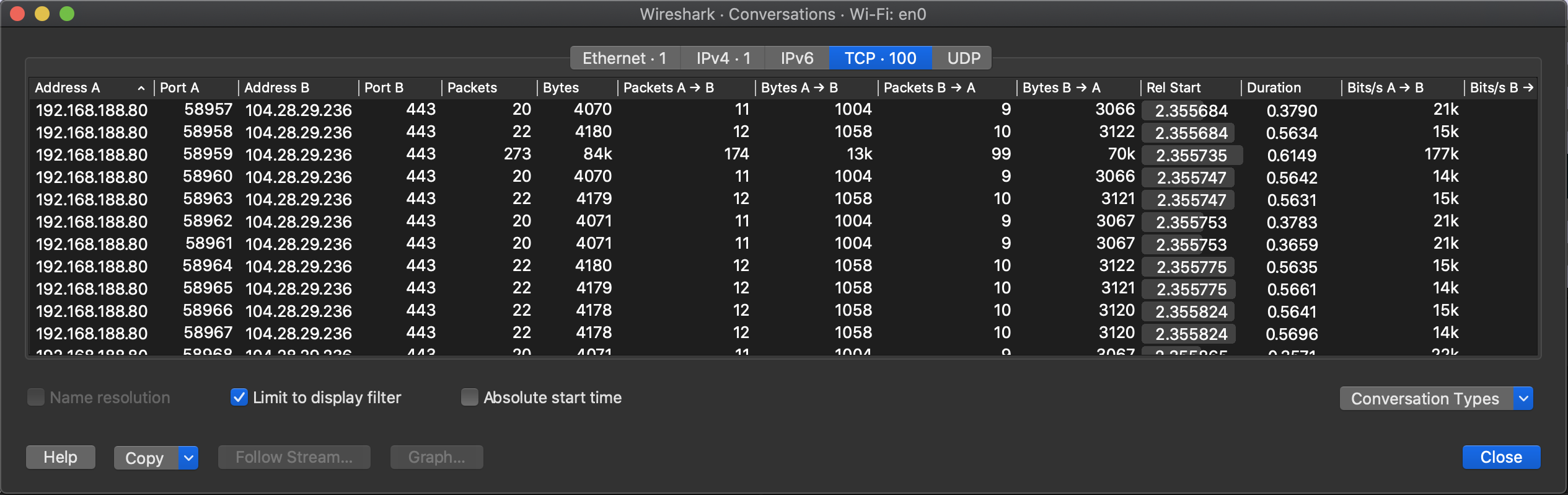


PSSS: Of course I used sync.WaitGroup in my test code.

Seems like the concurrency model in Go is a bit too fast for it to detect that there is an existing TLS connection over which it can transmit the new requests.

Also, I did try to amateurishly benchmark them, and the concurrent version is much faster!!